Why organizations need a 90-day on-ramp to AI governance – not another comprehensive blueprint

The most dangerous AI governance strategy in 2026 is the comprehensive one.

Not because comprehensive governance is wrong — it is, in fact, exactly what most organizations will eventually need. The danger lies in its unachievability for the organizations that need it now. The NIST AI Risk Management Framework, the EU AI Act, ISO 42001 — each is rigorous, well-designed, and built for an organization that already has dedicated risk teams, cross-functional governance councils, and years of institutional muscle memory. They are designed for the organization you want to become, not the organization you are today. And while you spend eighteen months becoming that organization, your AI systems are making decisions about customers, employees, and capital with no governance at all.

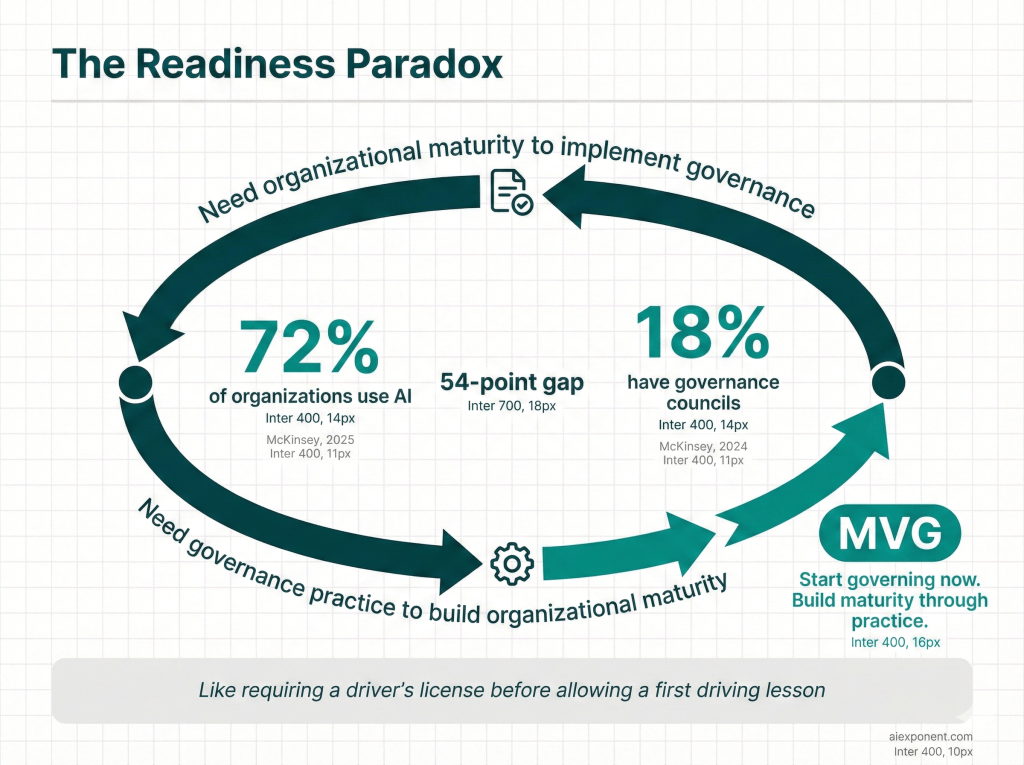

The numbers confirm the paralysis. McKinsey’s 2025 Global Survey reports that 72% of organizations now use AI regularly. Yet McKinsey’s own 2024 survey found that only 18% have established an enterprise-wide AI governance council with decision-making authority. Even accounting for the gap between survey years, the distance between deployment velocity and governance maturity is striking — and by all available evidence, widening. Deloitte’s 2026 State of AI in the Enterprise report found that 44% of organizations have teams deploying AI systems without any safety oversight at all.

I call this the “Readiness Paradox”: governance frameworks require organizational maturity that only comes from the practice of governing. It is like requiring a driver’s license before you are allowed to take your first driving lesson. You cannot build governance muscle by reading about governance. You build it by governing, starting with something small enough to actually do.

The question is not whether you need AI governance. The question is whether you can afford to wait until your governance is perfect before you start governing.

The Implementation Gap

The distance between “we need AI governance” and “we have AI governance” is not a knowledge gap. What separates them is an implementation gap — created by three specific traps that comprehensive frameworks inadvertently set.

The Documentation Trap. NIST’s AI Risk Management Framework and its Generative AI Profile together span hundreds of pages. ISO 42001 adds another layer. The EU AI Act introduces risk classification requirements under which 40% of enterprise AI systems could not be clearly classified, according to a 2025 analysis by appliedAI, a Munich-based AI research initiative, which examined 106 systems. Before an organization deploys its first governed AI system, it faces a documentation mountain that would challenge a dedicated compliance team, let alone a mid-market enterprise whose “AI governance team” is the CTO wearing a second hat.

McKinsey’s 2024 survey found that only one-third of organizations require GenAI risk awareness as a skill even for technical talent. That statistic captures the Expertise Trap in a single number. Comprehensive governance frameworks assume specialized roles — AI risk officers, algorithmic auditors, fairness testing engineers — that most organizations simply do not have. Gartner’s 2025 research found that 69% of organizations suspect employees are using prohibited public generative AI tools, yet only 28% of their employers have clear usage policies. The expertise to write those policies, let alone enforce them, does not exist in most organizations.

A governance design that took twelve months to build may be outdated before its first review cycle completes. That is the Perfection Trap — the assumption that you can “design complete, then implement” in a domain where AI capabilities change quarterly and new model architectures emerge monthly. This model assumes certainty in a domain defined by uncertainty. A governance design frozen in time cannot govern technology in motion.

The traps produce real failures — most of them the quiet kind, where organizations simply never start. But the loud failures are instructive in a different way. They show what happens when AI deploys into a governance vacuum. Consider Air Canada.

In November 2022, Air Canada’s website chatbot told a bereaved customer named Jake Moffatt that he could book a full-fare flight and apply for a bereavement discount within 90 days. That advice was wrong. When Moffatt applied for the discount, Air Canada denied it and argued the chatbot was “a separate legal entity responsible for its own actions.” In February 2024, the British Columbia Civil Resolution Tribunal rejected that argument and ruled Air Canada liable for its AI’s outputs, as reported by the American Bar Association.

Air Canada paid roughly CAD$650 and lost a chatbot. The average organization pays far more. EY’s 2025 AI Governance Survey found that 99% of organizations surveyed reported financial losses from AI-related risks, with an average loss of US$4.4 million per company. Meanwhile, shadow AI (employees using unauthorized AI tools without organizational visibility) now accounts for 20% of all data breaches, at an average cost of $4.63 million compared to $3.96 million for standard breaches, a premium of roughly $670,000, according to IBM’s 2025 Cost of a Data Breach Report.

Organizations are not failing at AI governance because they reject it. They are failing because the available frameworks ask them to become something they are not — yet — before they can begin.

The On-Ramp: Minimum Viable Governance

NIST tells you what to govern. MVG tells you how to start.

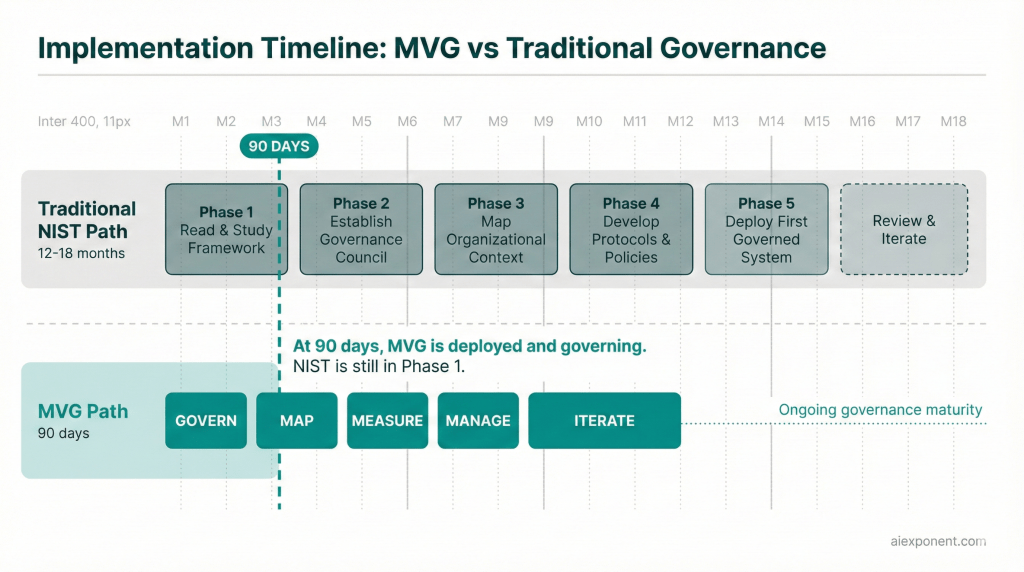

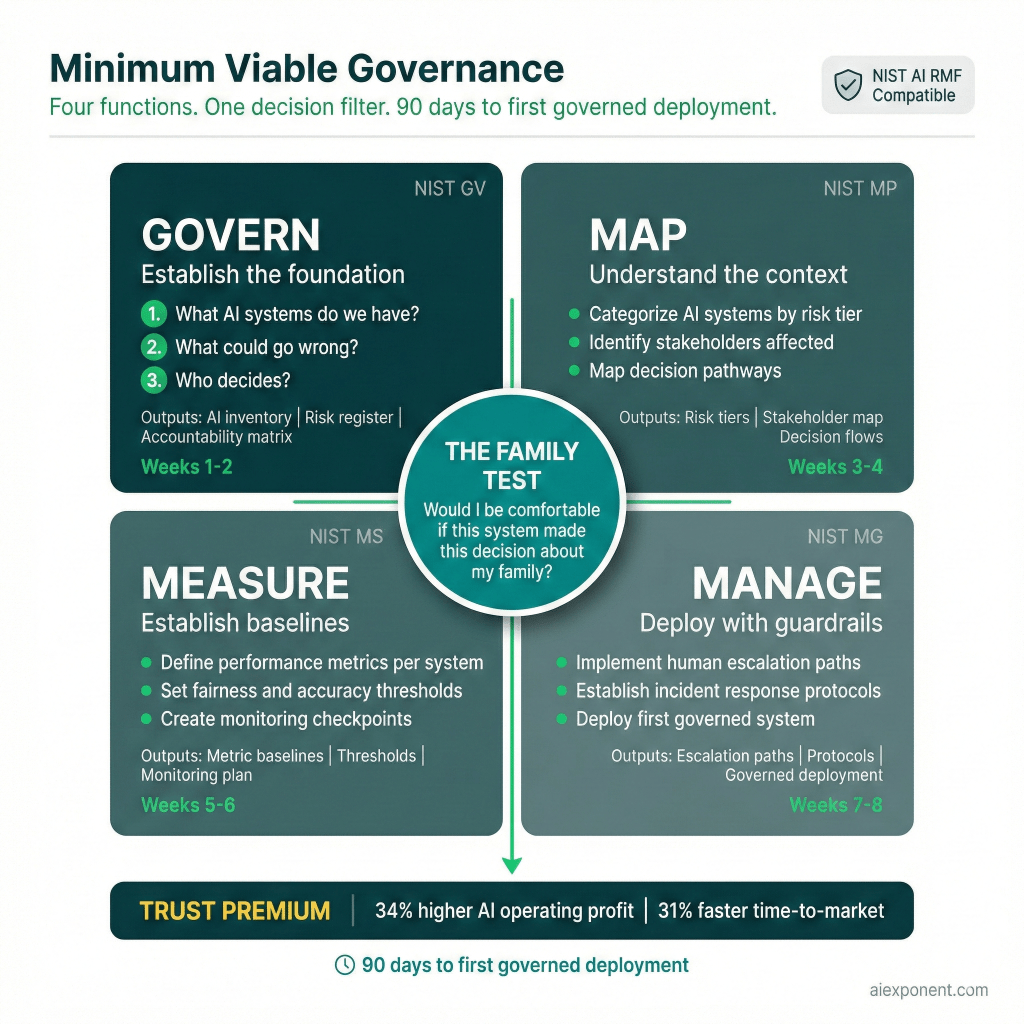

Minimum Viable Governance is not a competing framework. It’s an implementation architecture built on the same four core functions as the NIST AI Risk Management Framework — Govern, Map, Measure, Manage — with a fundamentally different philosophy: start governing now, with the smallest complete structure that works, and build maturity through practice rather than planning.

The distinction matters. MVG’s timeline is 90 days to your first governed AI deployment, not 90 days to full governance maturity. Full maturity takes years, and it should. What should not take years is the decision to start. The 90-day clock produces a functioning governance structure around your first AI systems: a governance charter, a risk inventory, measurement baselines, and deployment protocols with human escalation paths. Comprehensive? No. It was never meant to be. What it does is exist, as a living, functioning structure rather than a planning document.

Buying a gym membership does not make you fit. Neither does buying a governance framework make you governed. Fitness comes from showing up, doing the reps, building muscle through practice. MVG is the first workout — not the training plan for the Olympics, but the session that gets you off the couch. And that matters because consultants, however skilled, can transfer knowledge but cannot transfer organizational muscle memory. Governance maturity means having the reflexes to do it under pressure: the cultural willingness to pause a deployment, the escalation habits when a model behaves unexpectedly, the cross-functional coordination when a risk surfaces at 4 PM on a Friday. Those reflexes come from practice, not from a deliverable binder.

The difference becomes concrete fast. Take GOVERN — the first and most foundational NIST function — and see what MVG does with it.

NIST’s GOVERN function has five subcategories: policies and procedures, accountability structures, workforce diversity and AI expertise, organizational risk culture, and legal and regulatory compliance. Implemented comprehensively, these represent months of cross-functional work. MVG’s GOVERN phase asks three questions, answerable in two weeks:

1. What AI systems do we have? Build an inventory. Not a comprehensive data lineage map — a list. Which AI tools are in use, who uses them, and what decisions do they influence? Gartner’s 2025 research found that 69% of organizations suspect employees are using prohibited public generative AI tools. You cannot govern what you cannot see.

2. What could go wrong? A customer-facing chatbot giving wrong advice. A hiring algorithm screening out qualified candidates. A financial model producing biased credit decisions. For each system, identify the three most consequential failure modes — not an exhaustive risk taxonomy, but the three scenarios that would keep the CEO awake.

3. Who decides? Assign a governance owner for each system. Not a committee — a person, by name, with authority to pause a deployment if something goes wrong. IBM’s Institute for Business Value found that CEO involvement in AI governance jumps from 28% in typical organizations to 81% in those with mature oversight. That jump happens when someone takes ownership and starts escalating real decisions.

Those three questions produce three artifacts: an AI system inventory, a prioritized risk register, and an accountability matrix. Those artifacts are a proper subset of what full NIST GOVERN implementation requires — the same documents at an earlier maturity stage, not different documents that need to be thrown away later.
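As a rough illustration of how small these three artifacts can be, the sketch below models them as plain Python records. Everything in it is an assumption made for illustration: the `AISystem` fields, the helper names, and the example systems and owner are hypothetical, not part of the NIST framework or of any prescribed MVG template.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class AISystem:
    """One inventory row; every field name here is a hypothetical illustration."""
    name: str
    used_by: str
    decisions_influenced: str
    failure_modes: List[str] = field(default_factory=list)  # most consequential first
    owner: Optional[str] = None  # a named person with authority to pause, or None


def risk_register(inventory: List[AISystem], top_n: int = 3) -> Dict[str, List[str]]:
    """Keep only the first top_n failure modes per system, in the order listed."""
    return {s.name: s.failure_modes[:top_n] for s in inventory}


def accountability_matrix(inventory: List[AISystem]) -> Dict[str, Optional[str]]:
    """Map each system to its named governance owner (None if unassigned)."""
    return {s.name: s.owner for s in inventory}


def unowned_systems(inventory: List[AISystem]) -> List[str]:
    """List systems for which nobody can yet answer 'who decides?'."""
    return [s.name for s in inventory if not s.owner]


# Invented example data, not drawn from any real organization.
inventory = [
    AISystem(
        name="support-chatbot",
        used_by="customer service",
        decisions_influenced="fare and refund advice given to customers",
        failure_modes=[
            "gives wrong policy advice",
            "exposes personal data",
            "fails to hand off to a human",
            "goes offline at peak load",
        ],
        owner="A. Rivera",
    ),
    AISystem(
        name="resume-screener",
        used_by="HR",
        decisions_influenced="which candidates reach an interview",
        failure_modes=["screens out qualified candidates"],
    ),
]

print(risk_register(inventory)["support-chatbot"])  # first three failure modes only
print(unowned_systems(inventory))                   # systems still lacking an owner
```

A single list of records is enough to derive all three artifacts, which is the point: the inventory comes first, and the risk register and accountability matrix are views over it. A spreadsheet would serve equally well.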

The Family Test

But architecture is not judgment.

Before any governance structure becomes operational, it must pass through something no framework can systematize: a gut check.

I call it the Family Test: Would I be comfortable if this AI system made this decision about my family?

The Family Test is a smoke detector, not the fire code. A fire code is comprehensive: sprinkler placement, exit routes, fire-resistance ratings for every material. You need it eventually. But the smoke detector is what wakes you up at 3 AM before the house burns down. Simple, immediate, and binary: safe or not safe. The Family Test works the same way — it does not replace structured risk assessment, but it catches the dangers that structured assessment sometimes misses because they are moral, not procedural. That two-second pause forces a shift from “Will this pass compliance review?” to “Should this exist in its current form?”

That pause would have stopped Amazon’s recruiting tool before it was built. Trained on ten years of male-dominated resumes, the algorithm was penalizing resumes containing the word “women’s.” Would you be comfortable if that system made a hiring decision about your daughter? The question answers itself. Amazon eventually scrapped the tool entirely, as MIT Technology Review reported in 2018. The Family Test would have surfaced the question before development began.

The Trust Premium

The Family Test and the three GOVERN questions share a purpose: they get governance out of the planning phase and into practice. But the question every executive asks next is not “Is this the right thing to do?” They already know it is. The question is: “Does it pay?”

It does. And the evidence is becoming difficult to argue with.

IBM’s Institute for Business Value reported in 2025 that companies investing more heavily in AI ethics report 34% higher operating profit from AI initiatives. That number alone should end the “governance versus speed” debate. But the evidence extends beyond profitability: PwC’s 2025 Responsible AI Survey found nearly 60% of executives crediting responsible AI practices with boosting ROI and efficiency, while a 2025 MIT study showed organizations with digitally and AI-savvy boards outperforming peers by 10.9 percentage points in return on equity.

I call this the Trust Premium — the measurable business advantage that accrues to organizations whose AI systems are governed, auditable, and trustworthy. The Trust Premium is still an emerging measurement discipline, not a solved one. The California Management Review published a framework in 2024 showing both direct ROI components (compliance cost reduction, customer retention) and indirect components (brand value, organizational culture) that remain difficult to quantify precisely. But the directional evidence is now consistent across multiple independent studies: governed AI outperforms ungoverned AI on business metrics, not just risk metrics.

The competitive dynamics make this concrete. Consider two companies of similar size, both deploying AI-driven customer service. Company A implements full NIST-aligned governance before deployment. Twelve months in, they are still mapping risks. Company B implements MVG. In 90 days, they have a governed system in production: an AI inventory, risk tiers, a designated governance owner, monitoring protocols, and a human escalation path. By month three, Company B is serving customers, collecting performance data, and iterating on governance based on actual operating experience. Company A is still in committee.

Obsidian Security’s 2025 industry analysis suggests organizations with mature AI governance achieve 31% faster time-to-market and 23% fewer AI-related incidents. These figures, drawn from aggregated industry surveys, should be treated as directional rather than definitive. But the mechanism is well documented: governance reduces uncertainty, accelerates decision-making, and prevents costly incidents that derail timelines. IBM’s own experience governing over 1,000 AI models yielded a 58% reduction in data clearance processing time — direct evidence that structured governance accelerates rather than impedes operations.

According to Gartner’s 2025 predictions, AI regulatory violations will result in a 30% increase in legal disputes for technology companies by 2028. The EU AI Act’s first obligations became enforceable in August 2025, with high-risk system requirements arriving in August 2026. Gartner’s 2026 market analysis projects that by 2030, AI regulation will extend to 75% of the world’s economies. Organizations that build governance infrastructure now are building trust that ungoverned competitors cannot buy.

Intellectual honesty requires a caveat. MVG is not appropriate for every context from day one. Organizations in high-stakes regulated domains (healthcare diagnostics, criminal justice risk scoring, autonomous vehicle safety systems) may need governance structures that exceed MVG’s initial scope before any deployment. When IBM’s Watson for Oncology initiative consumed $62.1 million at MD Anderson without treating a single patient, as a University of Texas System audit revealed, the failure demanded rigorous clinical evidence standards, not a lightweight starting framework. For high-risk, regulated AI applications, MVG’s 90-day timeline refers to governance design before deployment, not deployment with concurrent governance. The stakes determine the sequence.

The AAA Path: From MVG to Maturity

MVG is scaffolding, not the building. Not pretty. Not permanent. But without it, the building never gets built. The natural question is: how does a 90-day governance sprint become a mature, scalable governance capability?

The answer is the AAA Framework: Assess, Align, Assure.

Assess is the MVG phase itself — 90 days to build the minimum viable structure: AI inventory, risk tiers, governance ownership, monitoring baselines, escalation paths. You now have a functioning governance organism. Small, imperfect, operational.

Over the next three to six months, Align expands what you built. The AI inventory you created in 90 days grows into full NIST MAP implementation with detailed context and impact analysis. The three GOVERN questions expand to cover all five NIST subcategories. Risk tiers evolve into quantitative measurement systems. None of this requires starting over — and that is the point most critics miss. The artifacts translate directly: MVG’s governance charter becomes NIST GV-1, the accountability matrix slots into GV-2, and the risk inventory feeds MAP functions. Same documents at different maturity levels, not different documents requiring replacement.
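The artifact translation described above can be kept as a simple lookup, so that planning the Align phase starts from what already exists. The sketch below is a minimal illustration under stated assumptions: the target labels are the shorthand used in this article, and the `align_plan` helper and its return shape are hypothetical.

```python
from typing import Dict, List, Tuple

# Mapping taken from the paragraph above; the labels are this article's shorthand.
MVG_TO_NIST: Dict[str, str] = {
    "governance charter": "GV-1",
    "accountability matrix": "GV-2",
    "risk inventory": "MAP",
}


def align_plan(existing: List[str]) -> Tuple[Dict[str, str], List[str]]:
    """Split the mapping into artifacts to expand and artifacts still to create.

    `existing` holds the names of MVG artifacts the organization already has.
    Matching ignores case and surrounding whitespace.
    """
    have = {name.strip().lower() for name in existing}
    carry_forward = {a: t for a, t in MVG_TO_NIST.items() if a in have}
    missing = [a for a in MVG_TO_NIST if a not in have]
    return carry_forward, missing


# Hypothetical organization that finished two of the three artifacts in 90 days.
carry_forward, missing = align_plan(["Governance charter", "Risk inventory"])
print(carry_forward)  # artifacts that expand in place during Align
print(missing)        # artifacts to create before Align can start
```

Nothing in the lookup is discarded at the next stage: each entry names a document that grows in place, which is what distinguishes this path from a rewrite.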

Continuous, auditable, automated where possible — Assure is where governance stops being a project and becomes an operating system. Gartner’s 2025 survey of 360 organizations found that those deploying AI governance platforms are 3.4 times more likely to achieve high governance effectiveness. Manual checks become automated monitoring. Exception-based review becomes continuous assurance. The organizations that reach this stage did not get here by designing the perfect system on paper. They got here by governing badly, then governing better, then governing well.

The objection that MVG “cuts corners” dissolves when you see the path. Every MVG artifact is a NIST-compatible artifact at an early maturity stage. The 90-day sprint does not create governance debt. It creates governance capital.

The path from MVG to maturity is clear. The question is whether you will walk it — and when. Because the timeline for that decision is not yours to set.

The Choice That Is Actually on the Table

Eighty-five percent of companies expect to deploy customized agentic AI systems in the near future, according to Deloitte’s 2026 report. Only one in five has a mature governance model for agentic AI. According to Gartner’s 2026 analysis, AI governance market spending will reach $492 million in 2026 and surpass $1 billion by 2030, driven by regulatory mandates extending across 75% of the world’s economies.

The governance reckoning is not theoretical. It is underway.

Here is what AI governance maturity actually looks like. Not a 244-page framework on a shelf. Not a committee that meets quarterly to review policies nobody reads. A practice — something your organization does, not something it has.

The organizations that will lead the AI era are not the ones that waited for perfect governance. They are the ones that started. And when someone asks whether their AI systems are trustworthy, they will have an answer that begins not with a document, but with a question: Would I be comfortable if this system made this decision about my family?

If you can answer that honestly for every AI system in your organization, you are governing. If you cannot, you know where to start. Bring one question to your next leadership meeting: For our three highest-risk AI systems, who — by name — is accountable for their outputs? If the room cannot answer, you have your 90-day starting point.