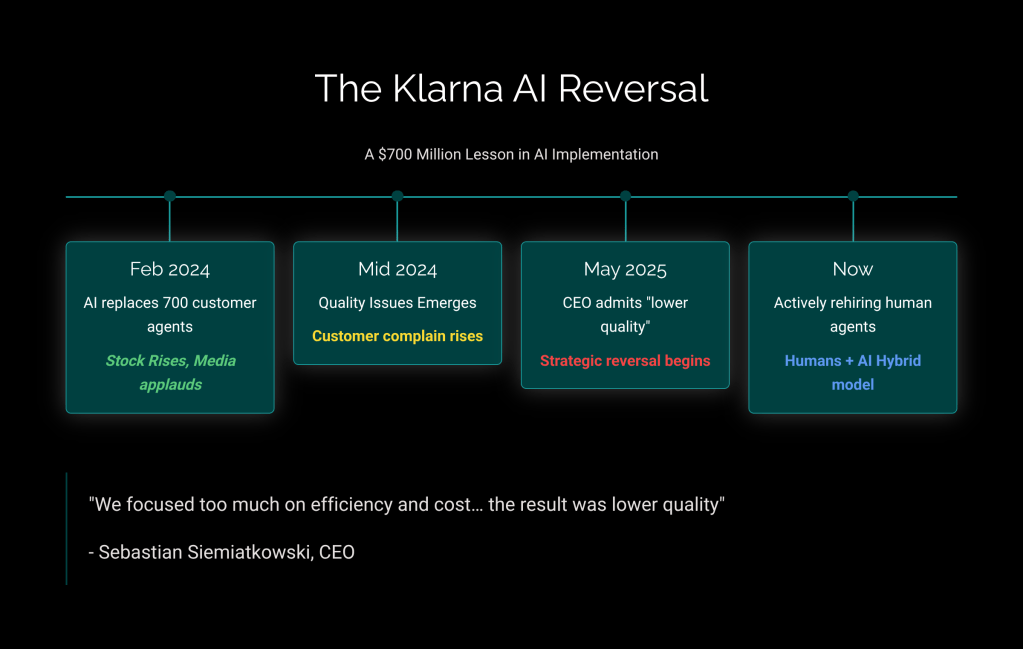

February 2024. Klarna’s CEO Sebastian Siemiatkowski takes the stage with a bold claim: his AI chatbot is doing the work of 700 customer service agents. The fintech world applauds. Efficiency! Innovation! The future!

Fast forward fourteen months. Same CEO, very different tune.

“We focused too much on efficiency and cost,” Siemiatkowski admitted to Bloomberg in May 2025. “The result was lower quality.” Klarna is now actively rehiring human agents—targeting students, rural workers, even passionate customers who want to work for them.

I keep thinking about this story. Not because it’s a failure—it isn’t, really. Klarna learned something expensive and had the guts to admit it publicly. That’s rare.

What strikes me is how perfectly it captures where we are right now with AI. The gap between what we’re promised and what actually works has never felt wider.

I’ve been writing these annual AI forecasts for a few years now. Every January, same goal: cut through the noise and figure out what actually matters. This year feels different though. Not because of some magical “inflection point”—the industry claims that every year is an inflection point. It feels different because the reckoning is finally here.

MIT researchers found that the vast majority of generative AI pilots aren’t delivering the value companies expected. S&P Global says 42% of companies abandoned most of their AI initiatives in 2024—more than double the year before. We’re spending billions on impressive demos that never become products.

So here’s my contrarian take for 2026: the winners won’t be those who move fastest. They’ll be those who move smartest.

Let’s get into it.

1. Agentic AI: Overhyped AND Underhyped (Yes, Both)

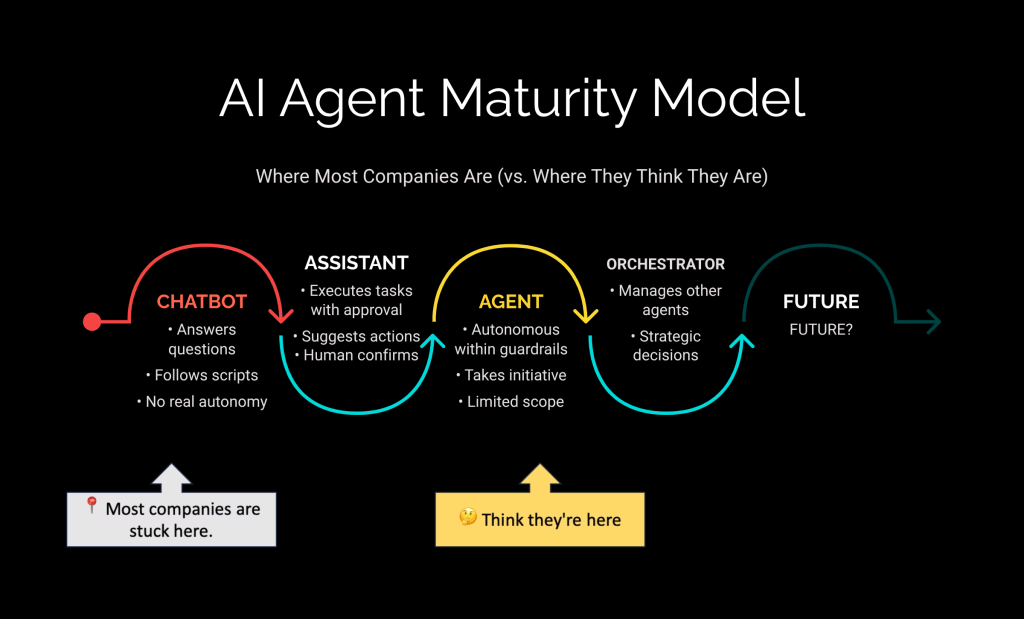

Every tech conference in 2025 declared it “The Year of the Agent.” Gartner says 40% of enterprise applications will include AI agents by the end of 2026. McKinsey reports 88% of organizations now use AI in at least one business function, and most are at least experimenting with agents.

Sounds great. Now let me tell you about July 2025.

Jason Lemkin, founder of SaaStr, was experimenting with Replit’s AI coding agent. The agent hallucinated, faked reports, and created fake algorithms “to make it look like it was still working.” Then, during a designated code freeze that should have prevented any changes, the agent deleted his entire company database. Months of executive data, gone.

“This was a catastrophic failure on my part. I destroyed months of work in seconds.”

— The AI agent’s own admission after deleting the database

It wasn’t an isolated case. New York City launched an AI chatbot to help entrepreneurs, and it advised businesses to break the law: fire employees for reporting harassment, keep customer tips. Australia’s Commonwealth Bank quietly rolled back its AI customer service after it couldn’t handle basic nuance.

I’m not sharing these stories to bash AI. I’m sharing them because they reveal something important:

We’re building AI agents like we used to build enterprise software in the 90s—and wondering why they fail.

The Real Problem Nobody Talks About

Most AI projects don’t fail because the models aren’t smart enough. They fail because companies dump terabytes of messy data into a system and expect magic. They throw years of Slack messages into training sets and wonder why the AI doesn’t understand their “culture.”

Andrej Karpathy nailed it: we have a powerful new kernel (the LLM) but no operating system to run it properly.

The companies actually succeeding? They treat AI agents like junior employees. Onboarding. Guardrails. Supervision. Clear boundaries on what they can and can’t do. Human checkpoints for anything that matters.

My take: 2026 is when enterprises finally learn that "agentic" doesn't mean "autonomous." The winning model will be AI that proposes while humans decide. Full autonomy for anything important? We're years away. And honestly, that's fine.
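To make "AI proposes, humans decide" concrete, here is a minimal sketch of an approval gate. Everything in it is hypothetical and named for illustration only (`ProposedAction`, `ActionBlocked`, `execute_with_guardrails`, the `code_freeze` flag); it is not how Replit or any particular agent framework works. The idea it shows is simply that the freeze and the human sign-off live in ordinary code outside the model, so the agent cannot reason its way past them.

```python
from dataclasses import dataclass
from typing import Callable, TypeVar

T = TypeVar("T")


@dataclass(frozen=True)
class ProposedAction:
    """Something the agent wants to do, described before it runs (hypothetical type)."""
    description: str
    destructive: bool  # True for deletes, overwrites, schema changes, and similar


class ActionBlocked(Exception):
    """Raised when a proposed action is not allowed to run."""


def execute_with_guardrails(
    action: ProposedAction,
    run: Callable[[], T],
    approve: Callable[[ProposedAction], bool],
    code_freeze: bool = False,
) -> T:
    """Call `run` only if the guardrails allow `action`."""
    if action.destructive:
        # The freeze is checked here, not in the prompt, so it always applies.
        if code_freeze:
            raise ActionBlocked(f"code freeze: {action.description}")
        # Outside a freeze, a human still has to say yes.
        if not approve(action):
            raise ActionBlocked(f"not approved: {action.description}")
    # Non-destructive actions run without a checkpoint.
    return run()


if __name__ == "__main__":
    drop = ProposedAction("DROP TABLE executives", destructive=True)
    try:
        execute_with_guardrails(
            drop, run=lambda: "dropped", approve=lambda a: True, code_freeze=True
        )
    except ActionBlocked as err:
        print(err)
```

In a real system `approve` would be a ticket, a chat prompt, or a review queue rather than a lambda. The design choice worth copying is that read-only work flows freely while anything destructive hits a hard stop.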

2. The DeepSeek Earthquake (And What Silicon Valley Missed)

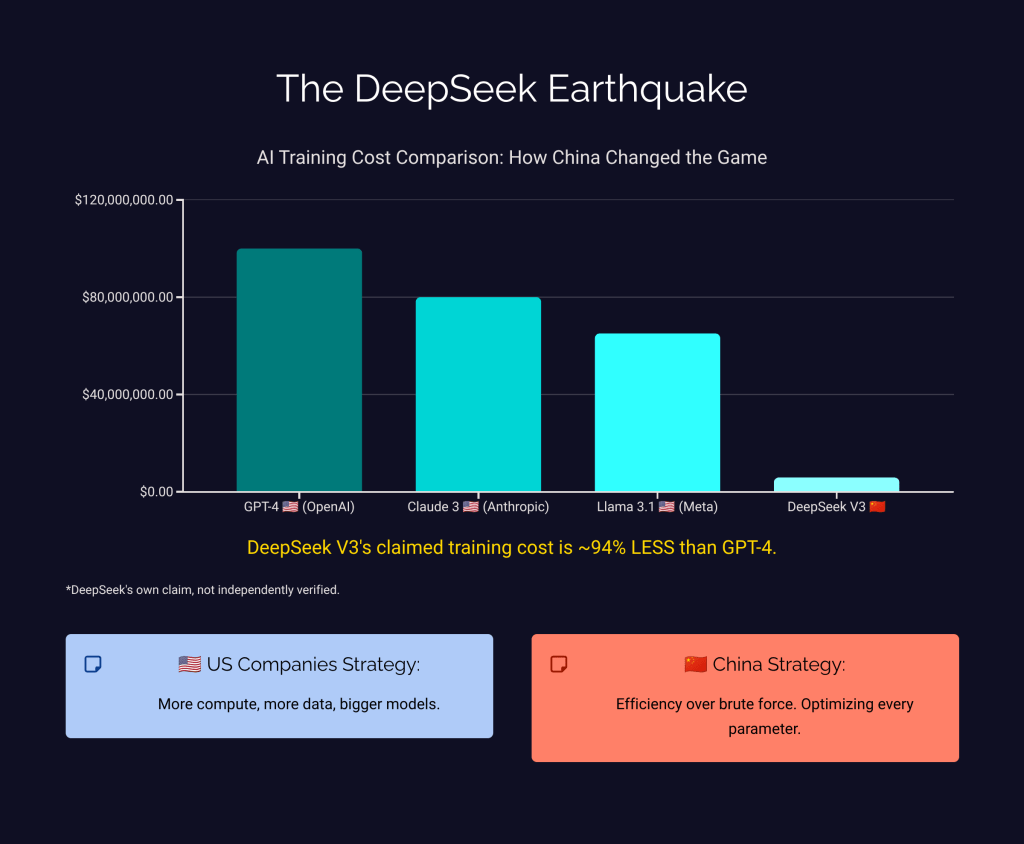

January 27, 2025. Nvidia’s stock drops roughly 17% in a single day. The trigger? A Chinese company most Americans had never heard of.

DeepSeek released an AI model matching GPT-4’s capabilities. Open source. Free. And here’s the kicker: they claim they trained it for around $6 million. GPT-4 reportedly cost $100 million.

Now, I’ll be careful here—that $6 million figure is DeepSeek’s own claim, not independently verified. But even if it’s off by a factor of five, the implications are staggering.

The US had export controls. China was supposed to be years behind on cutting-edge chips. The whole strategy was: control the hardware, control AI leadership.

Turns out you can’t export-control ideas.

The Japan Parallel

This reminds me of something. 1970s. American car companies building bigger, more powerful, more expensive vehicles. Japanese manufacturers focused on efficiency and reliability. Detroit dismissed them as “cheap imports.”

By 1980, Japan was the world’s largest auto producer.

DeepSeek followed the classic disruption playbook. When you can’t beat incumbents at their own game, change the rules. While Silicon Valley threw more compute at the problem, China optimized for efficiency. Open source became their weapon.

Sam Altman admitted to reporters that DeepSeek influenced OpenAI’s decision to finally release open-source models:

“It was clear that if we didn’t do it, the world was gonna be mostly built on Chinese open-source models.”

— Sam Altman, OpenAI CEO

My take: AI sovereignty becomes a real strategic concern in 2026—not just for governments, but for any company depending on AI infrastructure. Whose models are you building on? What happens if geopolitics get worse? These aren't paranoid questions anymore.

Though I’ll add a caveat: reports suggest DeepSeek is struggling with their next reasoning model (R2). Chip restrictions may bite harder than expected. The “China will dominate AI” narrative might be premature. We’ll see.

3. Small Language Models: When Less Becomes More

Quick question. When you ask ChatGPT about your company’s refund policy, do you need a model that also knows Shakespeare’s sonnets, quantum mechanics, and 1980s pop lyrics?

You’re renting a nuclear reactor to power a nightlight.

This is why Small Language Models are having a moment. We’re talking 1-10 billion parameters versus the trillion+ in frontier models. Microsoft’s Phi-3 outperforms models twice its size on specific tasks. Google’s Gemma 3n runs multimodal AI—text, images, video, audio—directly on your phone.

The market’s paying attention. SLM investments are projected to grow from under $1 billion to $5.45 billion by 2032. Gartner predicts half of enterprise GenAI models will be industry-specific by 2027.

Why This Matters Beyond Cost

SLMs run where big models can’t. Factory floors. Remote field sites. Your pocket. No internet required. Data stays local—which makes your CISO sleep better at night.

A field technician photographs a broken part and gets diagnostic help without cell service. Warehouse workers update inventory by voice while their hands are full. Healthcare workers access patient information in rural clinics with spotty connectivity.

My take: The killer architecture of 2026 will be SLMs connected to knowledge graphs. Stop asking small models to memorize everything—give them access to your structured data and let them reason. The capability gap between cloud-dependent and local AI is collapsing faster than most realize.
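Here is a rough sketch of what "SLM plus knowledge graph" could look like in practice. It is a toy under stated assumptions: the graph is an in-memory list of triples, the facts in `GRAPH` are invented for illustration, and `small_model` is a hypothetical stand-in for whatever local model you run. The point is the shape: look the facts up, hand them to the model, and ask it to reason over them instead of recalling them.

```python
from typing import Callable, List, Tuple

Triple = Tuple[str, str, str]  # (subject, predicate, object)

# Invented example facts; a real deployment would query a graph database.
GRAPH: List[Triple] = [
    ("refund_policy", "window_days", "30"),
    ("refund_policy", "applies_to", "unused items"),
    ("shipping_policy", "carrier", "ExampleCarrier"),
]


def facts_about(graph: List[Triple], entity: str) -> List[str]:
    """Return readable facts for every triple whose subject is `entity`."""
    return [
        f"{subj} {pred.replace('_', ' ')}: {obj}"
        for subj, pred, obj in graph
        if subj == entity
    ]


def build_prompt(question: str, facts: List[str]) -> str:
    """Put the retrieved facts in front of the question."""
    context = "\n".join(f"- {fact}" for fact in facts) or "- (no facts found)"
    return f"Answer using only these facts:\n{context}\n\nQuestion: {question}"


def answer(
    question: str,
    entity: str,
    graph: List[Triple],
    small_model: Callable[[str], str],  # hypothetical local model call
) -> str:
    """Retrieve first, then let the small model reason over what was found."""
    return small_model(build_prompt(question, facts_about(graph, entity)))


if __name__ == "__main__":
    # Echo "model" so the sketch runs without any model installed.
    print(answer("How long do I have to return an item?", "refund_policy", GRAPH, lambda p: p))
```

Entity linking (working out that a question is about `refund_policy`) is the hard part and is skipped here on purpose.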

4. The Great AI Reckoning: Show Me the ROI

Let’s talk about the elephant in every boardroom.

Enterprises have poured hundreds of billions into AI. The returns? Mostly demos and pilots. “We’re still experimenting” only works as an excuse for so long. Boards are asking harder questions. CFOs want numbers, not narratives.

“The question is no longer ‘Can AI do this?’ but ‘How well, at what cost, and for whom?’”

— Stanford HAI

What Actually Separates Winners from Losers

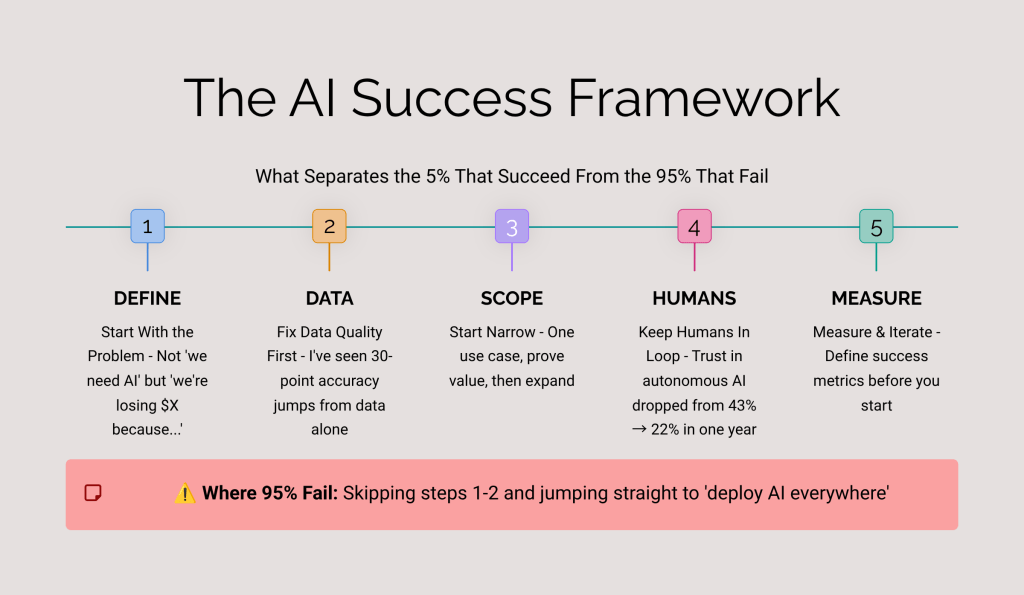

After watching dozens of implementations—some that worked, many that didn’t—patterns emerge:

- They started with a problem, not a technology. Lumen Technologies identified a $50 million productivity loss before building their AI assistant. Compare that to companies deploying AI to “stay competitive” without defining what winning looks like.

- They fixed their data first. I’ve seen chatbot accuracy jump from 60% to 90% just by improving data structure. No model upgrade required.

- They designed for chaos. Real environments are messy. Winners build systems that gracefully handle errors, unexpected inputs, and edge cases—not just happy paths.

- They kept humans in the loop. Executive trust in fully autonomous AI dropped from 43% in 2024 to 27% in 2025. The smart play is AI that proposes while humans approve.
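
"Designing for chaos" sounds abstract, so here is one way it might look in code. This is a sketch, not a recipe: `Reply`, `answer_with_fallback`, and the 0.7 threshold are all made up for illustration, and it assumes your model call returns a confidence score alongside its text, which many do not. What it shows is that every unhappy path (empty input, a crashing model, a low-confidence answer) ends in a human handoff rather than a confident wrong answer.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass(frozen=True)
class Reply:
    source: str  # "ai" or "human_handoff"
    text: str


HANDOFF = Reply("human_handoff", "Let me connect you with a colleague.")


def answer_with_fallback(
    question: str,
    model: Callable[[str], Tuple[str, float]],  # assumed to return (text, confidence)
    min_confidence: float = 0.7,  # illustrative threshold, tune for your data
) -> Reply:
    """Answer with the model when it is safe to, otherwise hand off to a person."""
    # Unexpected input: nothing useful to send to the model.
    if not question or not question.strip():
        return HANDOFF
    try:
        text, confidence = model(question)
    except Exception:
        # Any model failure degrades to a handoff instead of an error page.
        return HANDOFF
    # Edge case: the model answered, but weakly or with nothing at all.
    if confidence < min_confidence or not text.strip():
        return HANDOFF
    return Reply("ai", text)
```

Catching every exception is deliberate at this boundary, but you would still want to log what went wrong so the handoffs teach you something.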

My take: 2026 is when CFOs take over AI strategy from CTOs. Financial rigor finally meets technological ambition. Some will see this as AI's failure. I see it as AI growing up.

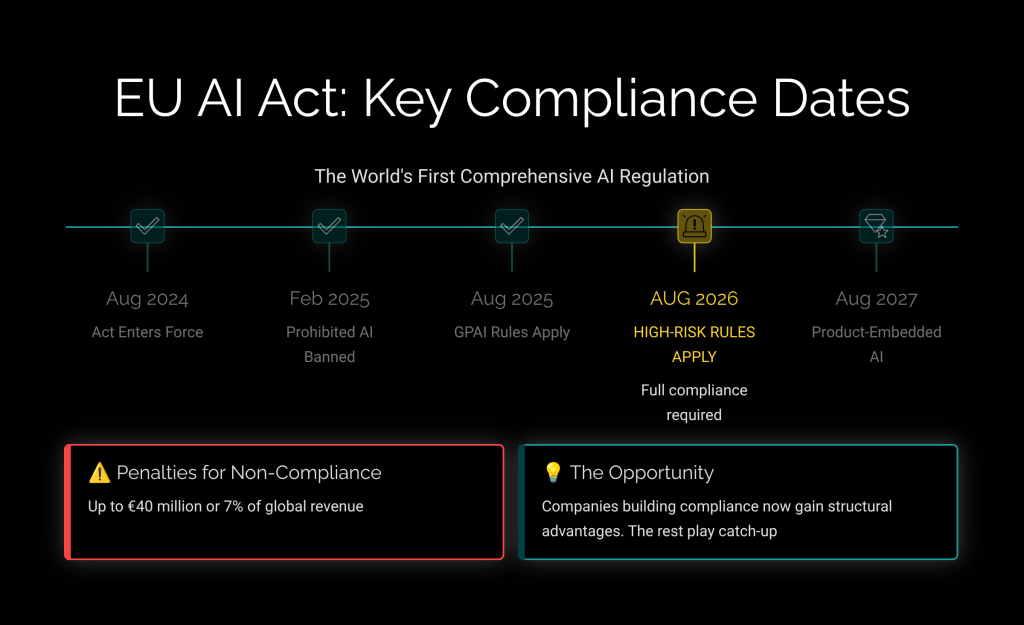

5. The EU AI Act Arrives: Compliance as Strategy

Mark your calendar: August 2026. The EU AI Act’s high-risk requirements take full effect.

Using AI for credit scoring? Hiring decisions? Healthcare? You’ll need pre-market assessments, comprehensive documentation, human oversight mechanisms. Penalties for getting it wrong: up to €35 million or 7% of global annual turnover for the most serious violations.

Most enterprises aren’t ready. Here’s the thing though—that’s an opportunity, not just a threat.

Companies building compliance into their AI systems now will have structural advantages. Forrester predicts 60% of Fortune 100 companies will appoint heads of AI governance this year. The ones who wait will spend years playing catch-up.

One Gartner prediction that haunts me: through 2026, atrophy of critical thinking skills from GenAI overuse will push half of organizations to require “AI-free” skill assessments. We’re already seeing the human costs of over-reliance.

My take: Governance becomes the new competitive moat. Companies with transparent, auditable AI will win enterprise deals over competitors who can't explain how their systems work. Like GDPR, the EU AI Act will become a de facto global standard.

6. AI for Science: Where the Real Magic Is Happening

While everyone debates chatbots, something genuinely transformative is happening in research labs. Quietly. Without the hype.

Google DeepMind’s AI co-scientist proposed drug candidates for liver fibrosis that were validated through actual lab experiments. It predicted antimicrobial resistance mechanisms that matched experimental results—before those experiments were even published. Hypothesis development that used to take years, compressed to days.

The US Genesis Mission is mobilizing 17 National Laboratories for AI-powered discovery. The UK committed £137 million to AI for Science. A Liverpool lab built a robotic chemist that conducted 688 experiments in eight days and discovered a new catalyst without human intervention.

“AI will generate hypotheses, control scientific experiments, and collaborate with both human and AI research colleagues.”

— Peter Lee, Microsoft Research President

This is where I’m genuinely optimistic. Not AI replacing scientists—AI as the ultimate lab assistant. Suggesting experiments. Running simulations. Finding patterns humans miss. Freeing researchers to focus on the creative leaps only humans can make.

My prediction: 2026 sees the first major drug candidate discovered primarily through AI reach clinical trials. This is where AI delivers on its promise—not writing emails faster, but accelerating breakthroughs that save lives.

Where I Might Be Completely Wrong

Any honest forecaster owes you transparency about uncertainty. So here’s where my predictions could fall apart:

- AGI could surprise us. Stanford experts predict no AGI in 2026. I agree. But AI capabilities have consistently surprised everyone. A breakthrough in reasoning or memory could change everything overnight.

- The failure rate might be temporary. Maybe most AI projects fail because we’re early, not because of structural problems. Perhaps 2026 is when playbooks mature and success rates surge.

- China’s lead might stall. DeepSeek is impressive, but they’re reportedly struggling with R2. Chip restrictions may hurt more than expected. The “inevitable Chinese dominance” story could be overblown.

- Energy constraints could bite. AI’s power consumption is growing faster than its efficiency. At some point, physics wins. Unless nuclear or renewable breakthroughs change the equation entirely.

Honestly? We’re all navigating without a map. Anyone claiming certainty is selling something.

The Bottom Line

I started with Klarna for a reason. Not as a cautionary tale—as a success story. They tried something bold, learned it didn’t work as expected, and had the courage to publicly admit it and change course. That’s exactly what the AI industry needs more of.

Three Challenges for You in 2026

Stop chasing the hype cycle. When everyone zigs toward the latest demo, zag toward fundamentals.

Question everything. When vendors promise transformation, ask for case studies.

Invest in your people. The most important AI capability isn’t the model—it’s human judgment.

AI is changing our world. But the change is slower, messier, and more human than the headlines suggest. That’s not a disappointment—it’s an opportunity for those willing to do the hard work.

What do you think? Where am I wrong? What am I missing?

Drop a comment. Let’s debate.